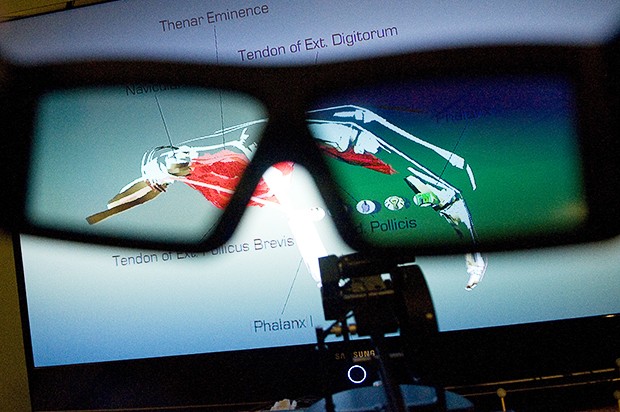

Maybe it’s the novelty of drawing on air. Perhaps it’s the variety that comes with collaborative work, which keeps him exposed to other research areas. Or, it could be the way visualizing and interacting with scientific data in virtual reality combines a background in art, and a way to be creative, with the technical sophistication of computers. In any case, assistant computer science professor Daniel Keefe describes a lot of what he’s been up to since arriving at the University of Minnesota last summer as “fun.”

In Keefe’s interactive visualization lab, a set of 3D glasses, a large television screen and a device called a phantom, which connects a “pen” to a computer, allow users to draw in the air and see their work in three dimensions. Keefe aims to create virtual reality environments to help researchers visualize and interact with data.

Virtual reality requires two things: a 3D view and a tracker (in Keefe’s lab, it’s attached to the 3D glasses) that tells the computer where the user’s head is so it can adjust the view accordingly. For example, if the user ducks under the object, they’ll see its underside.

The idea is that being able to view data or models intuitively would lead to a better design process for things like medical devices. “We’ve learned since we were born how to interact with the real world,” he said. “Typically on a computer, you can’t do that, but in virtual reality, you could.”

Mechanical engineering professor Art Erdman, who directs the University’s Medical Devices Center, said his group in mechanical engineering has been working for the past two years on creating a virtual reality design environment. When Keefe came to the University, his work fit right in.

“I said, ‘whoa, this is just the person we need to work with,’” Erdman said.
“I’m just absolutely thrilled.”

Keefe said that so far, their collaboration has involved mostly brainstorming, but they envision creating a virtual, interactive environment that would show an organ like the heart in combination with the medical device to be used with it. The idea, and challenge, is to show many features of the heart, like blood-flow paths and pressure on heart tissue walls, along with a mechanical device, in a single environment, Keefe said.

Such a tool would be used between the “bench-testing” stage, which involves building a prototype and mechanically testing it, and animal or clinical trials, Erdman said. With it, researchers could visually check whether the device is working right, get feedback on how it affects things like blood flow in the heart and tweak its design, Erdman said.

Another challenge, Keefe said, is figuring out how to best enable design in a virtual reality environment. “We’ve got both our hands to work with, but we don’t know what to do with them,” he said. So a big question is how best to interact with the computer, to give it input that’s just as spatial as its 3D output. But the right ways to do that are not yet defined, he said.

Now, his interactive lab allows people to draw lines, but he wants them to be able to specify surfaces and use data to describe shapes or “clean up” drawings. For example, designers could create rough drawings of a heart valve device and then have the computer reinterpret it to fit heart valve size data.

Erdman said local industry is very interested in virtual reality visualization for device design. “I think the people who’ve looked at this would agree that this is going to be the future,” he said.

One long-term goal is to use data from individuals’ MRI scans to personalize medical devices, Keefe said. But today, these goals are just ideas.
Right now, Keefe said, commercial medical device design software is based mainly on what people have in their offices, which is usually a computer and a mouse. “We’d like to change that,” he said. “We think better design could be done if you instead had a virtual reality setup.”

With this semester’s new interactive scientific visualization class, he’s also exposing his students to visualization problems based on the needs of researchers in other fields. Students each made research proposals involving visualization that could benefit a researcher in another field, and some of the proposals will be chosen for students to work on throughout the rest of the semester. The concept mimics the way proposals are made and handled by government funding agencies, first-year computer science graduate student Vamsi Konchada said.

Konchada is collaborating with a University archaeologist to analyze features of ancient stone tools, using 3D laser scans, to determine how they were made. Currently, there is no automated procedure for doing this, so it is done manually, Konchada said.

Computer science graduate student Danny Rado said he will be working on a project aimed at improving game-based exercise visualization (like the Wii Fit) so it could be used for physical therapy. Right now, Wii games can be used for physical therapy only in the clinic, because the game doesn’t provide enough feedback for the patient to ensure they’re making the right movements. So the goal of the project is to visualize the appropriate motion and give users the feedback they need to mimic it.

Prof brings new dimensions to data

Daniel Keefe, a new University faculty member, looks to use visualization to help other researchers.

Image by Stephen Maturen

A look through the 3D glasses shows a model of a hand’s skeletal and muscle structure. Keefe hopes the technology can someday be used in the medical field for training.

Published March 9, 2009
